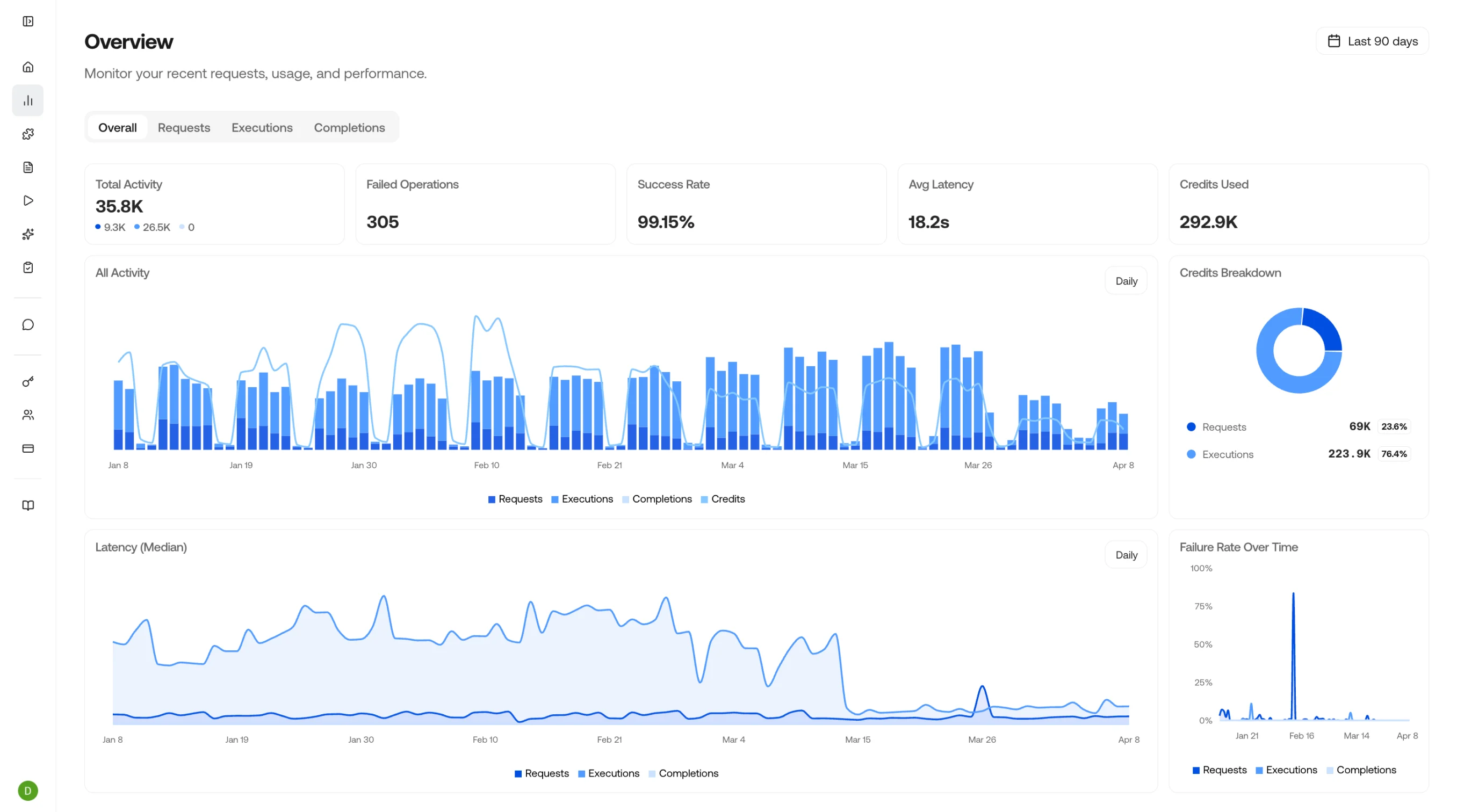

- Observe: Full observability across your visual AI usage. Monitor requests, track executions, review completions, and keep costs in check.

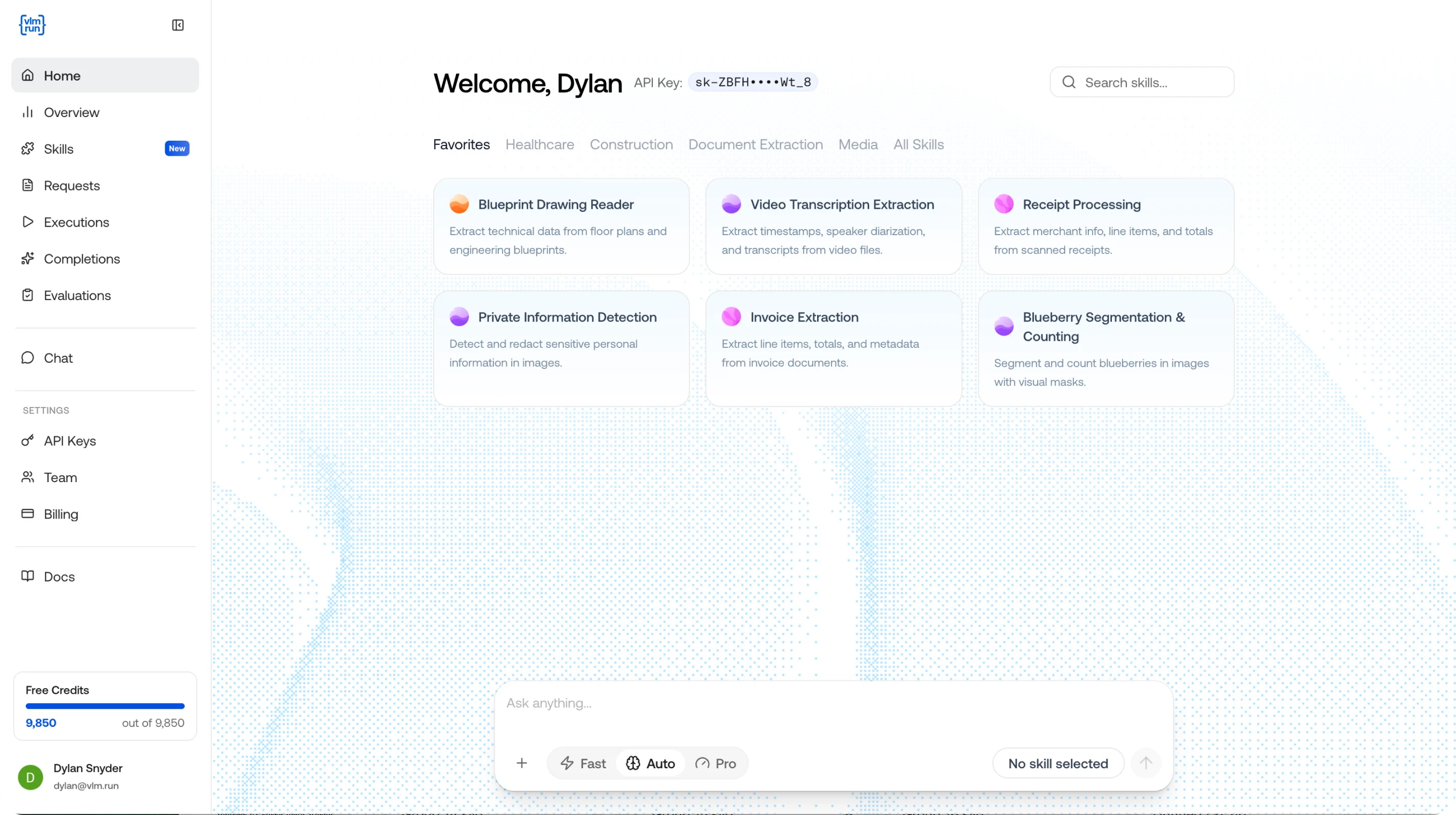

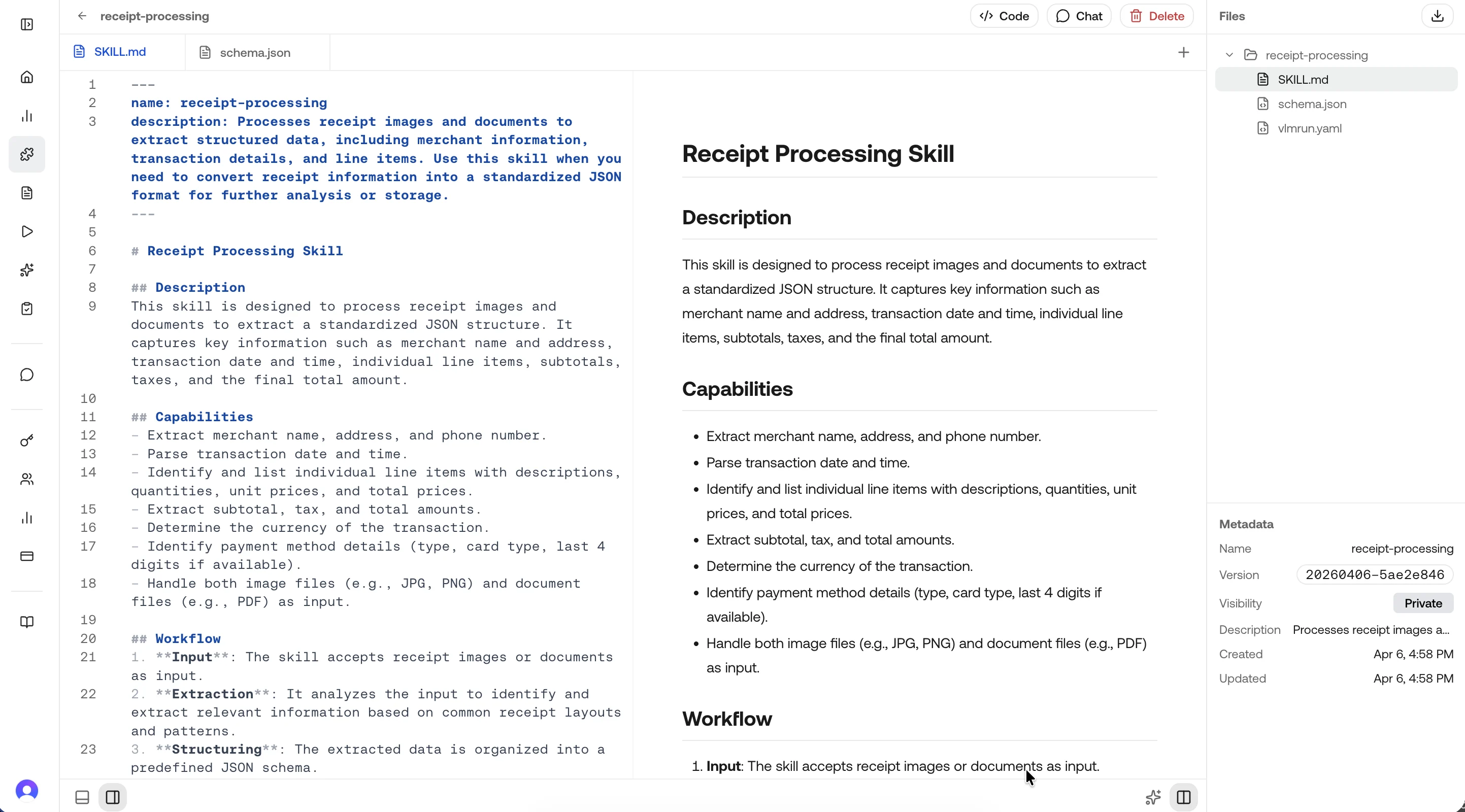

- Skills: Modular, reusable capabilities that tell the model what to extract and how to structure it. Create once, reference from any endpoint.

- Chat: The interactive playground for your visual agent. Attach images, PDFs, or videos and get structured responses in real time.

- Evaluations: Measure accuracy for skills, agents, and domains, using feedback as ground truth. Run evaluations from the dashboard and review per-field metrics.
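
To make "per-field metrics" concrete, here is a minimal sketch of how per-field accuracy could be computed when feedback is treated as ground truth. It is an illustration only, not the platform's implementation: the function name `per_field_accuracy`, the record layout, and the sample field names are all assumptions.

```python
from collections import defaultdict


def per_field_accuracy(predictions, feedback):
    """Return {field: fraction of records whose prediction matches feedback}.

    Hypothetical helper: both arguments are lists of dicts, aligned by index.
    Only fields present in the feedback record are scored.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for pred, truth in zip(predictions, feedback):
        for field, expected in truth.items():
            total[field] += 1
            if pred.get(field) == expected:
                correct[field] += 1
    return {field: correct[field] / total[field] for field in total}


# Illustrative data (assumed field names, not a real skill schema).
predictions = [
    {"invoice_id": "A-1", "total": 120.0},
    {"invoice_id": "A-2", "total": 80.0},
]
feedback = [
    {"invoice_id": "A-1", "total": 120.0},
    {"invoice_id": "A-2", "total": 85.0},
]

scores = per_field_accuracy(predictions, feedback)
print(scores)  # {'invoice_id': 1.0, 'total': 0.5}
```

Scoring each field separately shows which parts of a structured output are unreliable, which a single overall score would hide.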

Explore the Platform

Home

Monitor requests, executions, and completions across the platform in a single pane of glass.

Skills

Create, edit, and manage reusable extraction skills for your team.

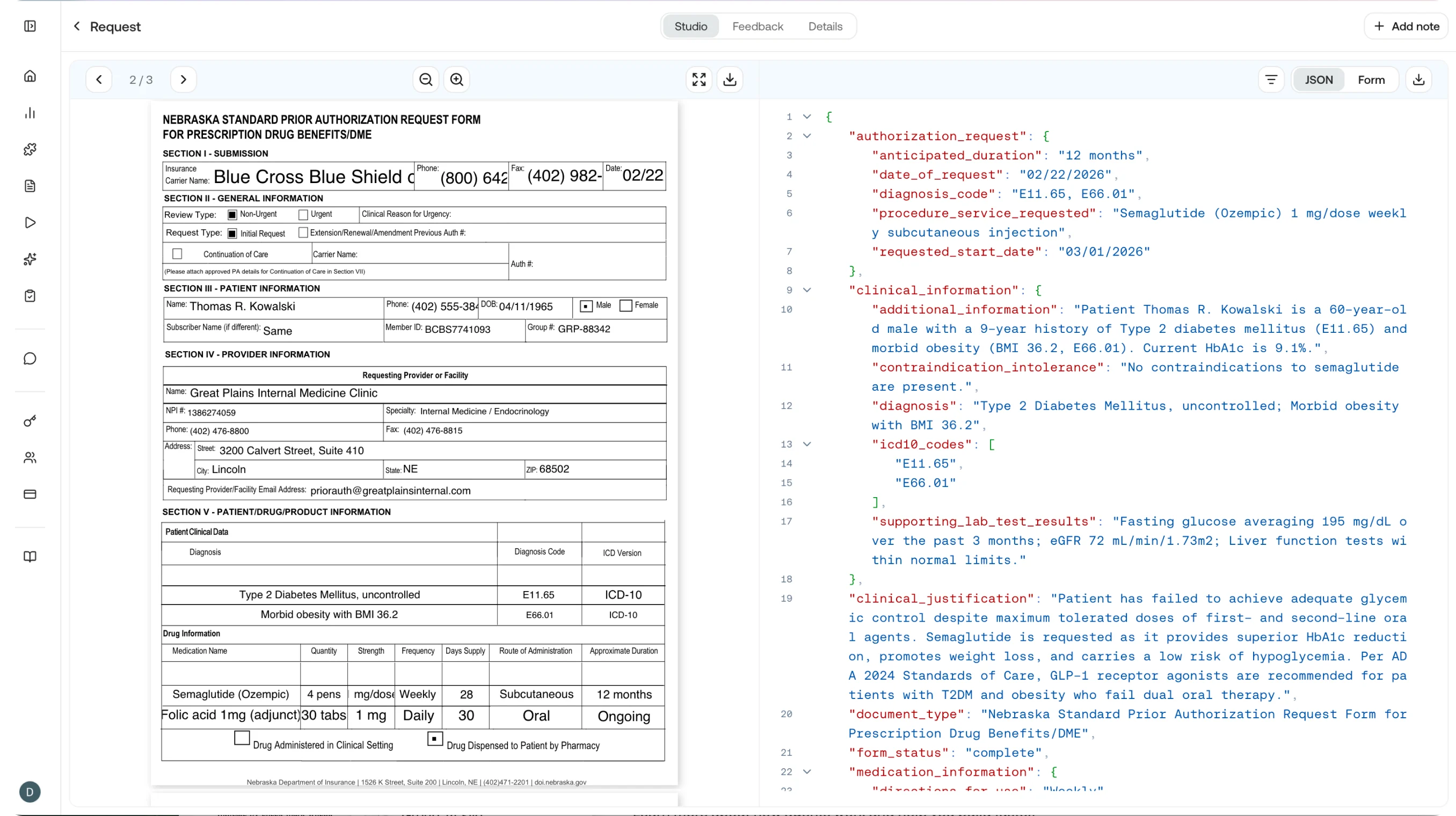

Requests

View and filter all API requests with status, duration, and cost.

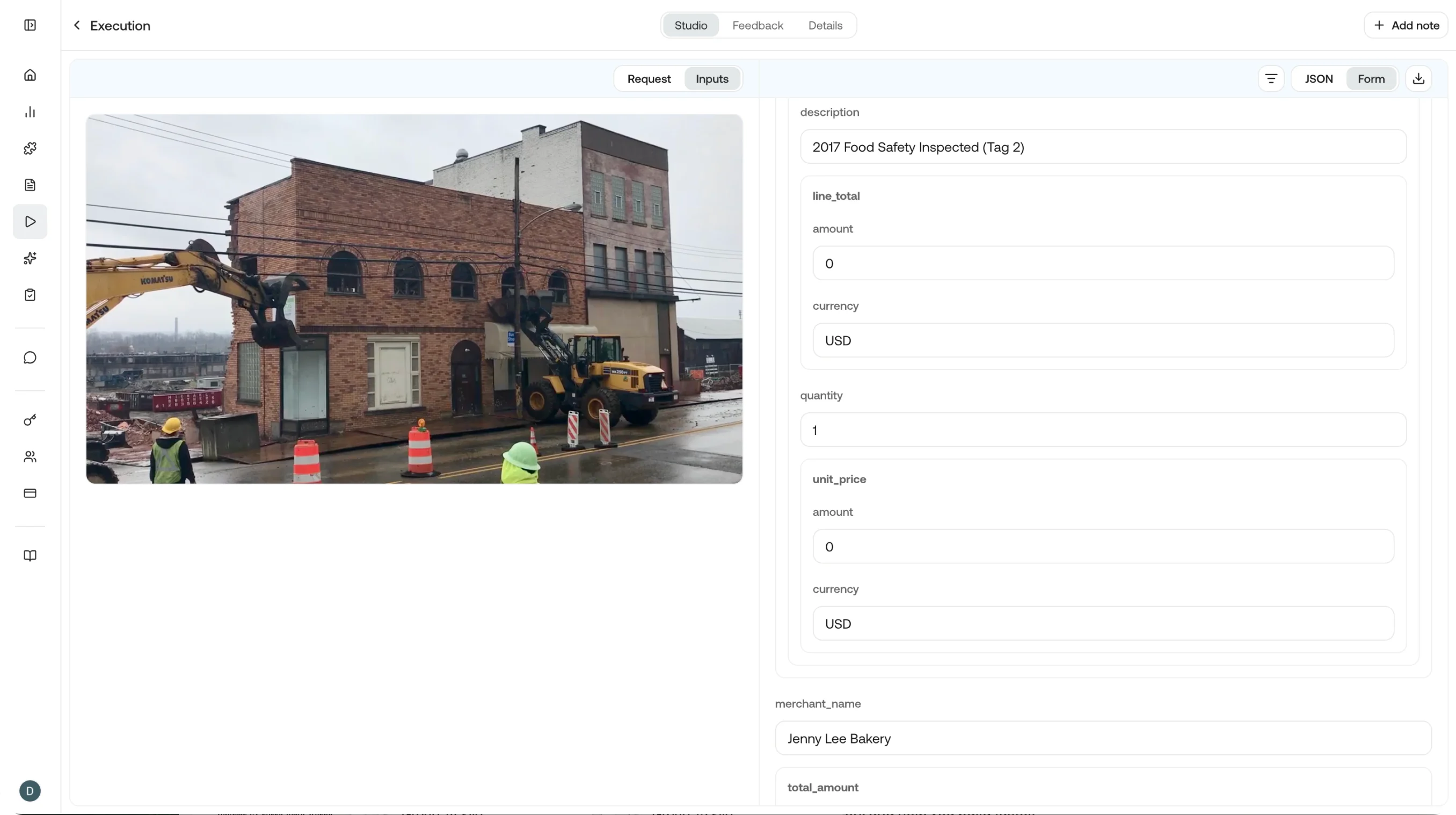

Executions

Track agent and skill executions end to end.

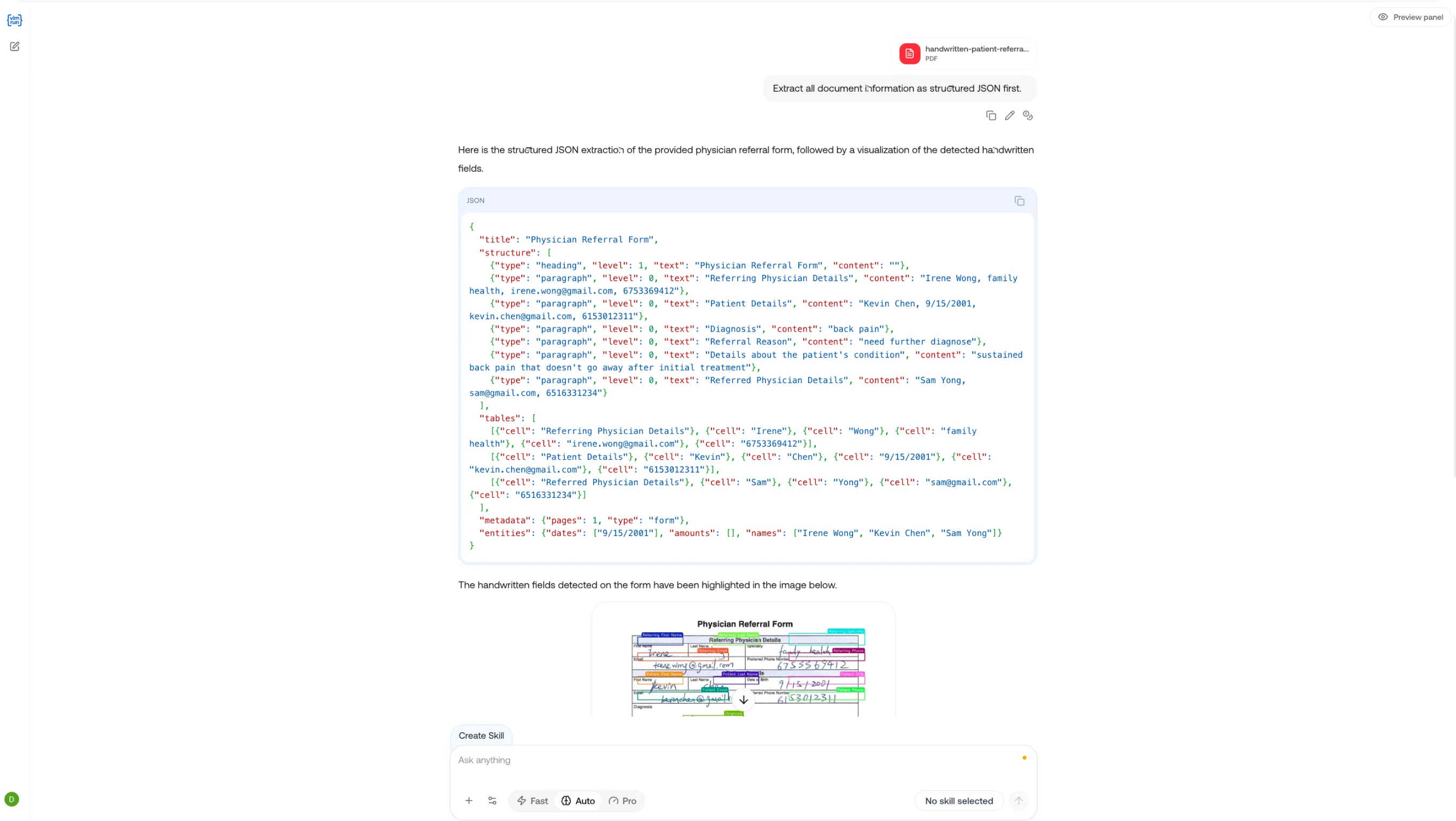

Chat

Send messages to Orion, attach files, and get structured visual responses.

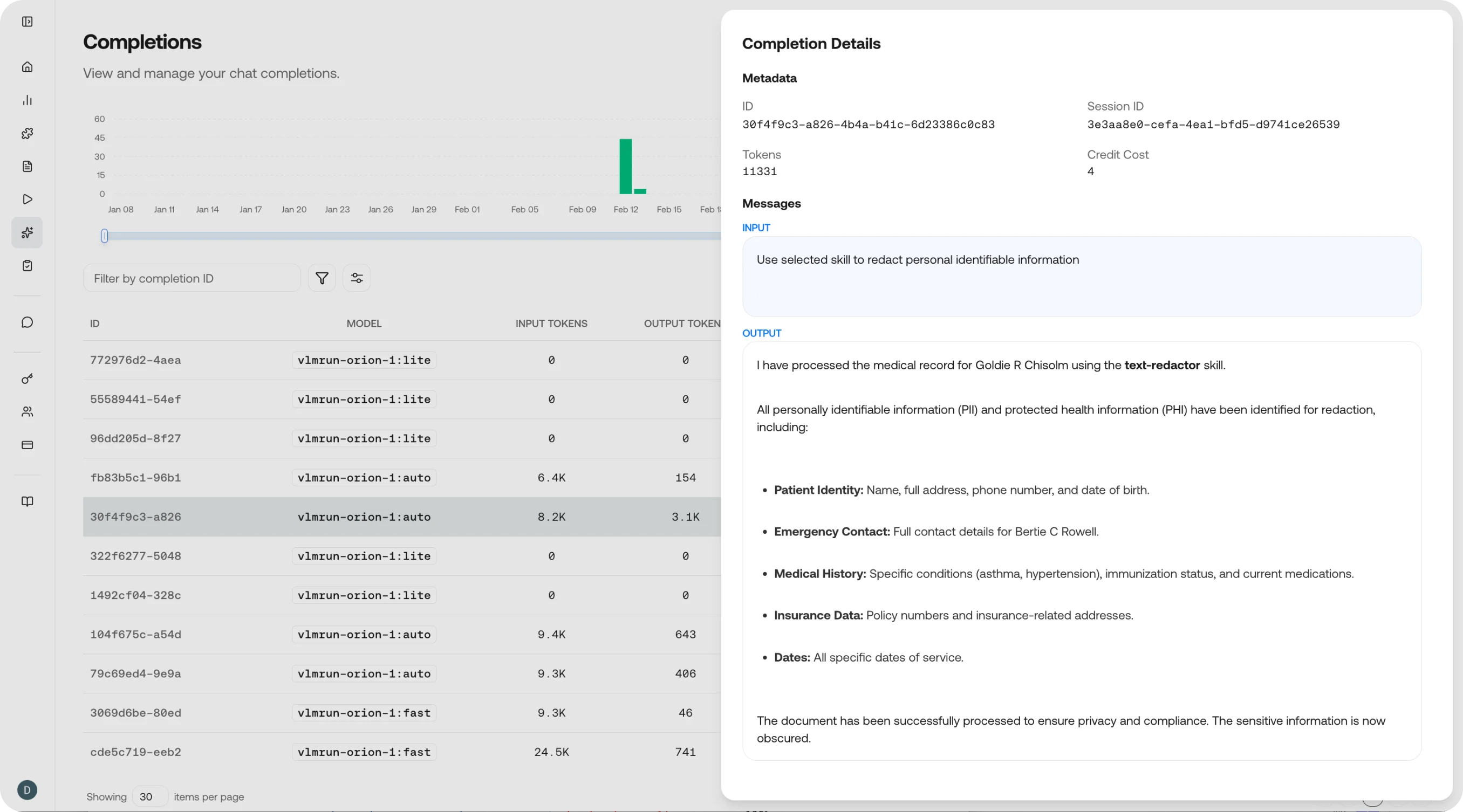

Completions

Browse model completions with token usage and output details.

Quick Links

Try Orion

Jump straight into the playground and chat with Orion for free.

Open Dashboard

Sign in to the VLM Run platform to manage your account.

API Docs

Integrate programmatically with the VLM Run REST API.

Skills Reference

Dive deep into the skill specification and lifecycle.